Jacob Waites

I design, prototype, and build brands and interactions full of expressive type, fun motion, bold colors, and minimal line-work. Sometimes I write and speak about design. My craft is the way I speak to the world, and I work to help others find their voice and to make them successful.

Pillpack People

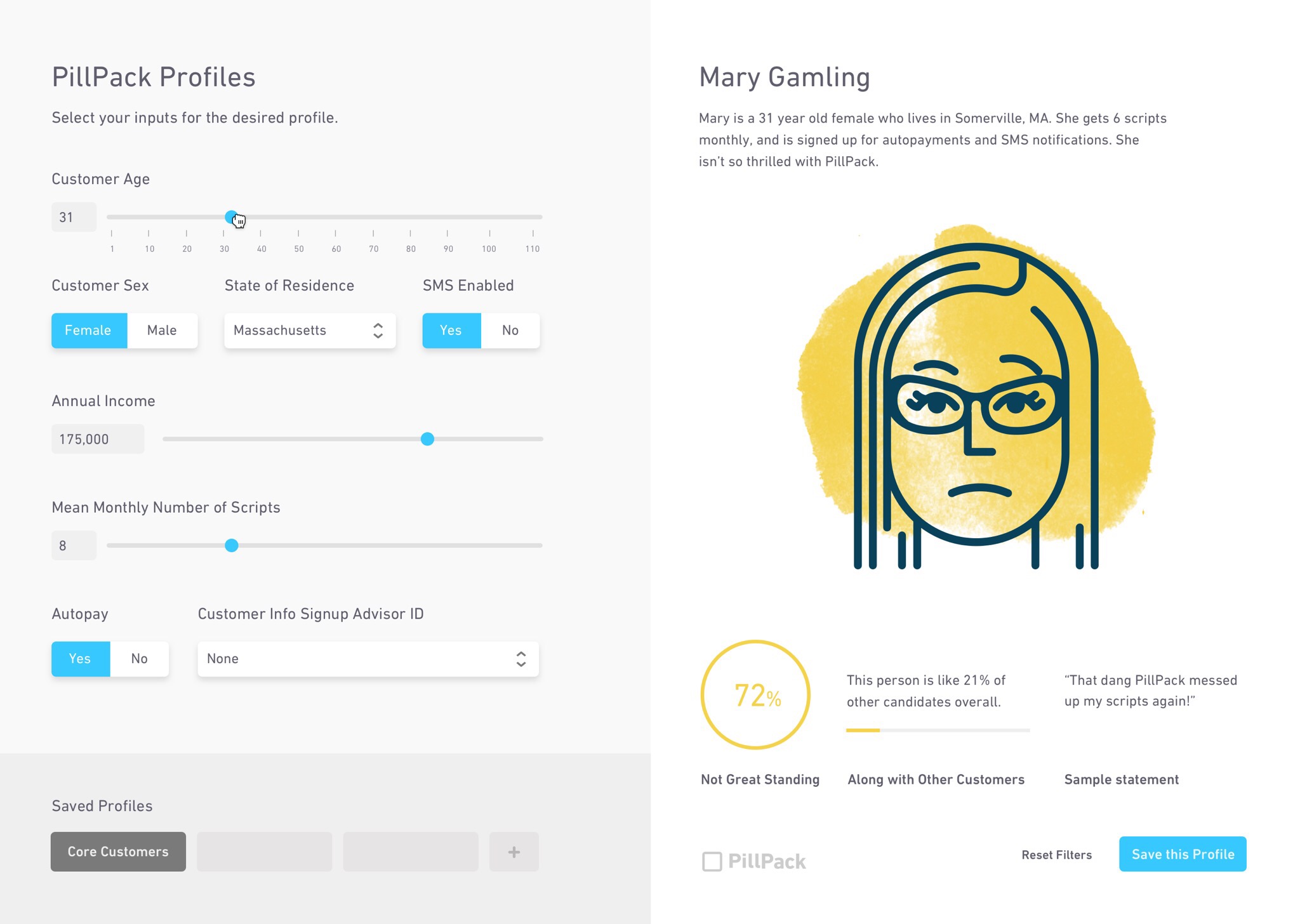

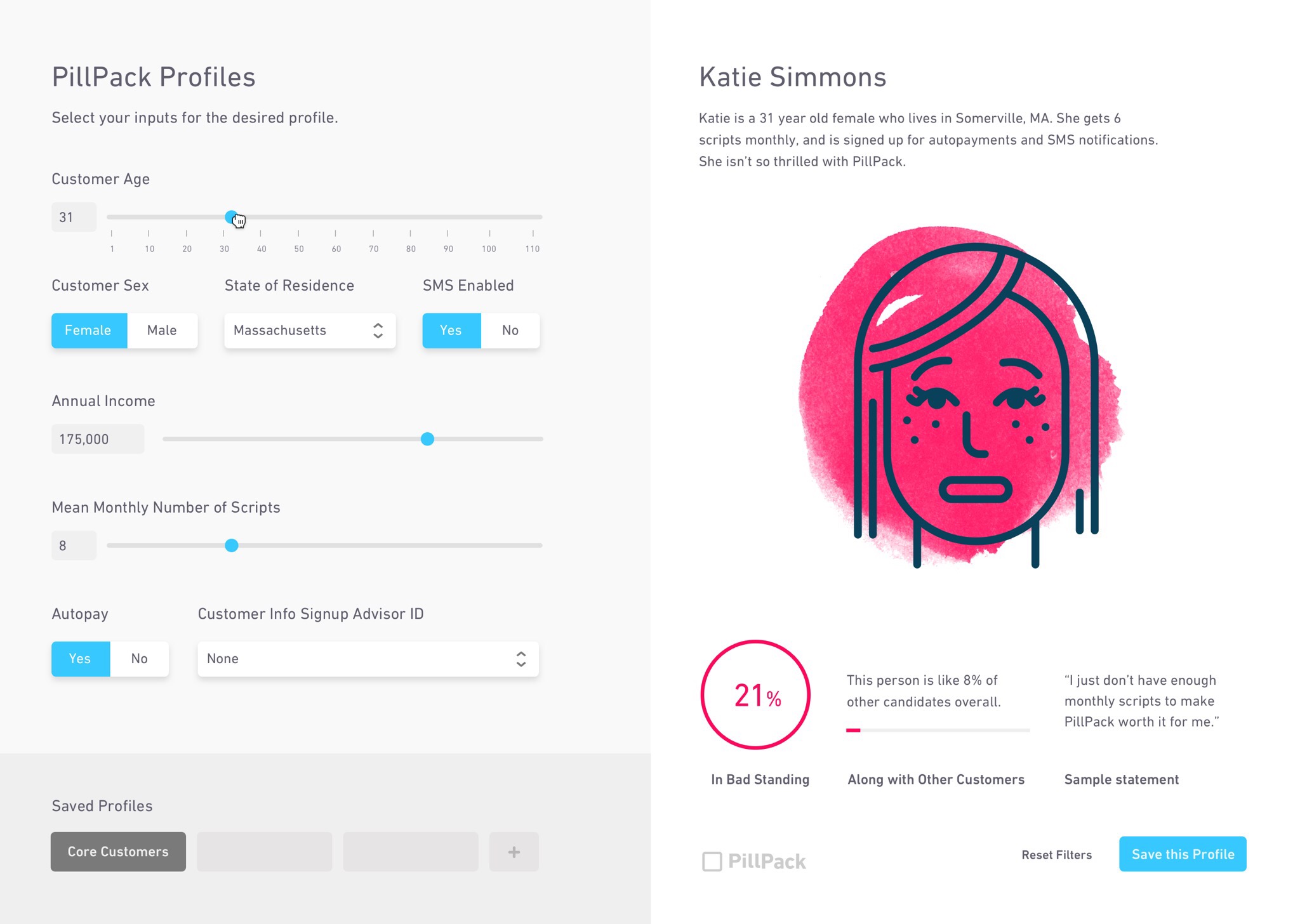

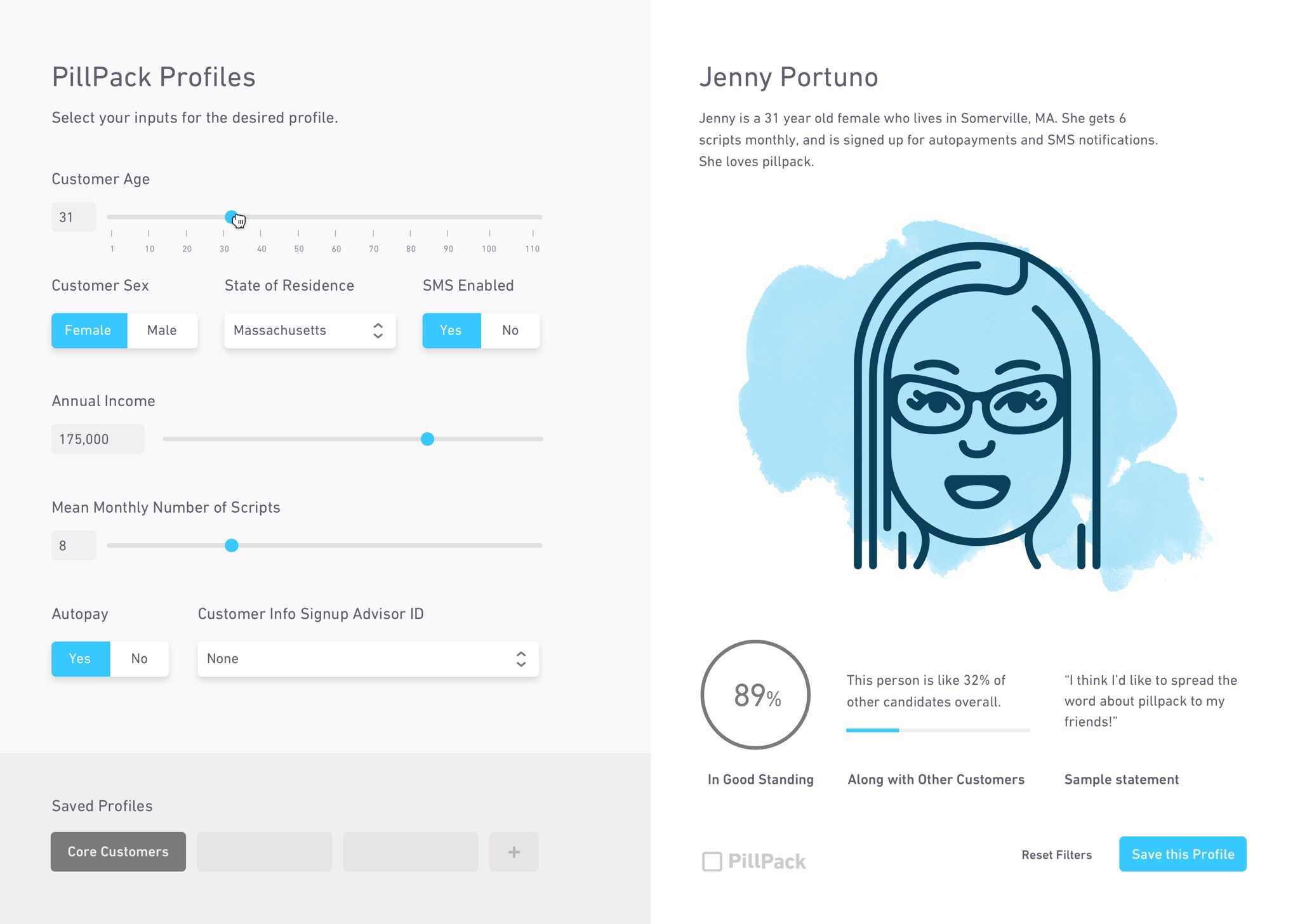

Pillpack People is a prototype that uses customer service feedback data to predict the likelihood that a given customer will choose to leave the Pillpack service. It empowers customer service call center employees by augmenting their intelligence, helping them craft the conversation they're having with an individual in real time.

The Prototype

Part of an augmented intelligence sprint at CoLab, Pillpack People was developed by Reid Williams and Parker Woodworth, with me leading visual and interaction design on the project. I created live code sketches of the different interactions and data-driven visualizations, which Parker then implemented in the final prototype.

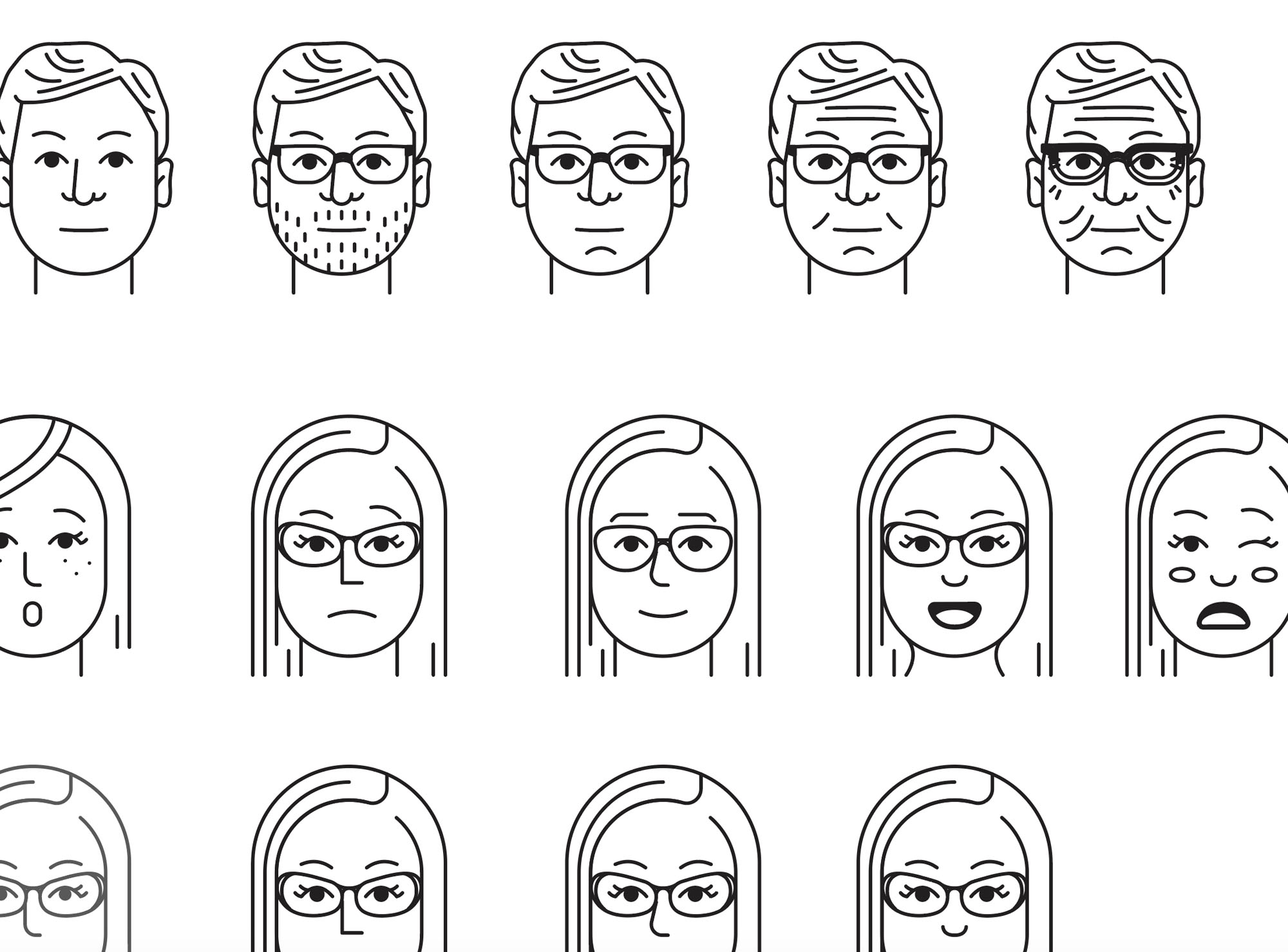

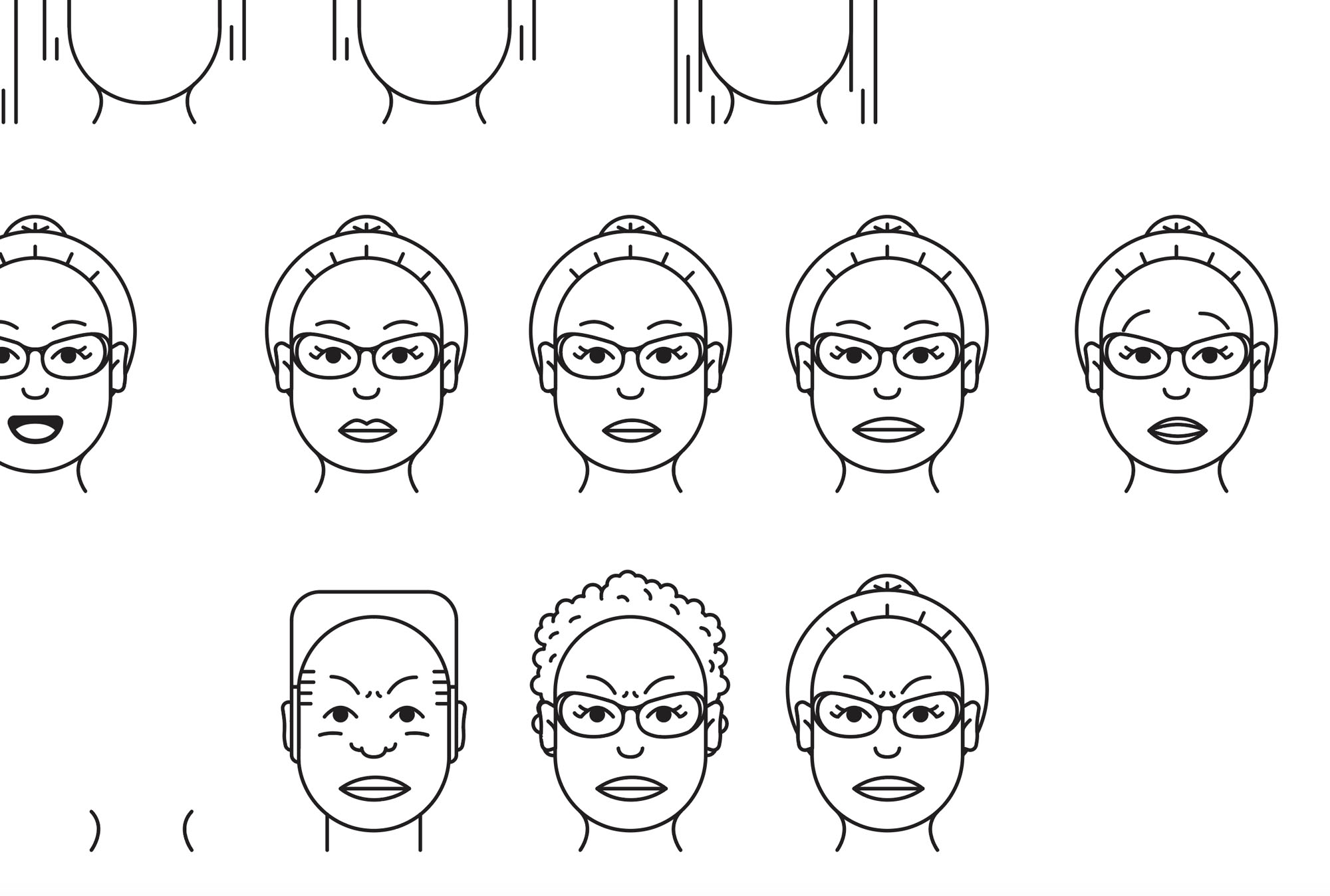

During the project, we found that patterns surfaced in the anonymized data that were very similar to user personas. We wanted a way to visualize these people that would create a sense of empathy for a person with a given set of traits while preserving the privacy of the customers in the data. As a call center employee adjusts the sliders on the left side of the application to input traits about a customer, the prototype generates a face for a customer with those traits. It also generates a bio for the customer, using a name generator algorithm and adding a small backstory and location based on broader user groups.

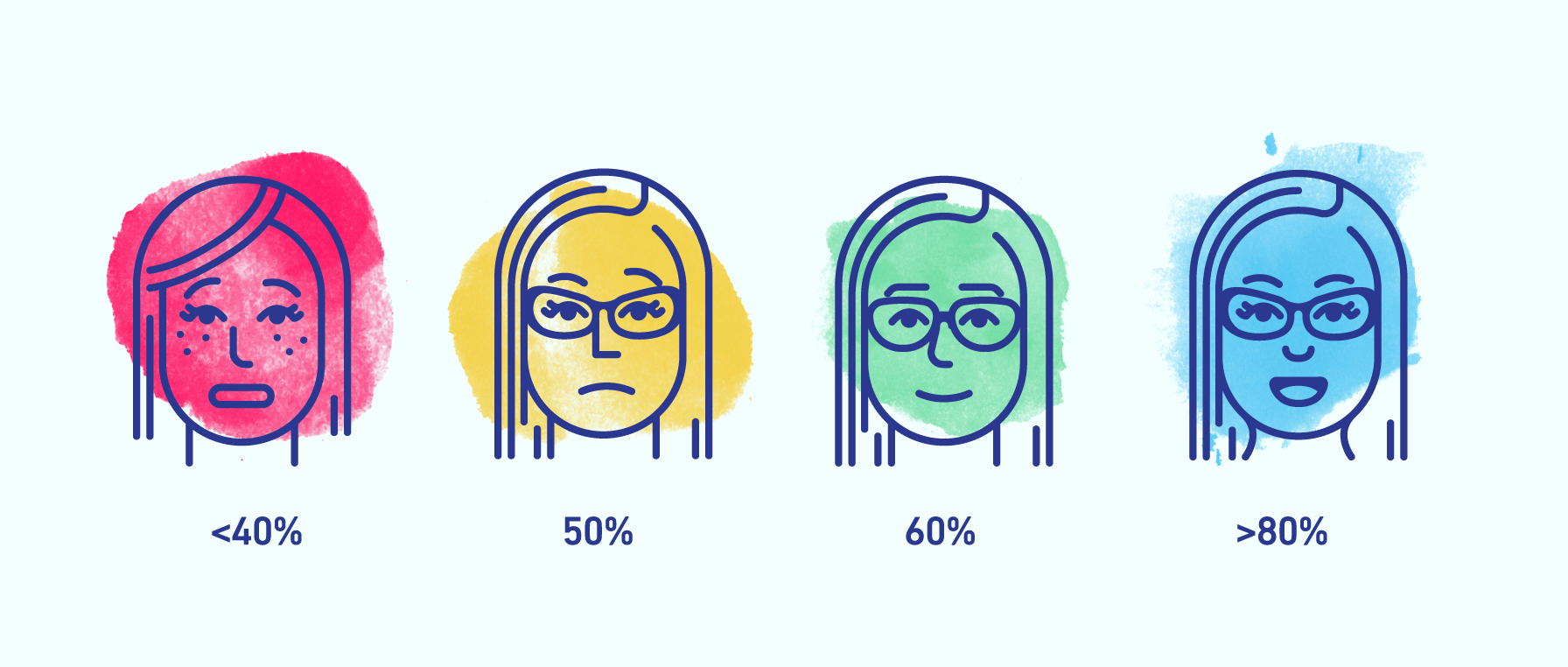

The prototype seeks to provide at-a-glance feedback that communicates the emotions the person on the other end of the phone might be feeling. As a result, the expressions on the generated face can range from happiness and laughter to anger or shouting. Behind each face is a unique watercolor splash that changes color and shape whenever a new face is generated. The splash shifts to a warmer color for anger or a cooler color for contentment. In this way, a customer's mood can be communicated very quickly using minimal text.
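One way a mood-to-color mapping like this could work is a linear blend between a cool and a warm endpoint. The sketch below is illustrative only and is not the prototype's code: the `anger` scale from 0 to 1, the `COOL` and `WARM` endpoint colors, and the `moodColor` function name are all assumptions.

```typescript
type RGB = [number, number, number];

// Assumed endpoint colors; the prototype's real palette is not documented here.
const COOL: RGB = [64, 156, 255]; // contentment
const WARM: RGB = [255, 94, 58]; // anger

// Linear interpolation between a and b for t in [0, 1].
function lerp(a: number, b: number, t: number): number {
  return a + (b - a) * t;
}

// Map a hypothetical anger score (0 = content, 1 = angry) to a CSS color string.
// Values outside [0, 1] are clamped to the nearest endpoint.
function moodColor(anger: number): string {
  const t = Math.min(1, Math.max(0, anger));
  const r = Math.round(lerp(COOL[0], WARM[0], t));
  const g = Math.round(lerp(COOL[1], WARM[1], t));
  const b = Math.round(lerp(COOL[2], WARM[2], t));
  return `rgb(${r}, ${g}, ${b})`;
}
```

The returned string could be applied as a fill or fed into a color filter. Blending in RGB is the simplest option; a perceptual color space would likely give smoother midpoints.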

Prototype Video

Visual System

The system uses more than 100 SVG shapes that I custom-illustrated on a vertical and horizontal grid system. There are sets of face shapes, eye shapes, brows, noses, mouths, and facial hair, each weighted differently in a randomization algorithm that generates a new face each time a slider is changed.
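A weighted random pick of this kind can be sketched as follows. This is a minimal illustration under assumptions, not the prototype's implementation: the `WeightedShape` type, the shape ids, the weights, and the `pickWeighted` and `generateFace` names are all hypothetical.

```typescript
// Hypothetical shape record; ids and weights are illustrative, not the project's data.
interface WeightedShape {
  id: string;
  weight: number; // relative likelihood, must be >= 0
}

// Pick one item with probability proportional to its weight.
// `rand` is injectable so the choice can be made deterministic in tests.
function pickWeighted<T extends { weight: number }>(
  items: T[],
  rand: () => number = Math.random
): T {
  const total = items.reduce((sum, item) => sum + item.weight, 0);
  if (items.length === 0 || total <= 0) {
    throw new Error("pickWeighted needs at least one item with positive weight");
  }
  let threshold = rand() * total;
  for (const item of items) {
    threshold -= item.weight;
    if (threshold < 0) return item;
  }
  return items[items.length - 1]; // guard against floating-point drift
}

// Build a face by picking one shape id from each category (mouths, brows, ...).
function generateFace(
  categories: Record<string, WeightedShape[]>,
  rand: () => number = Math.random
): Record<string, string> {
  const face: Record<string, string> = {};
  for (const name of Object.keys(categories)) {
    face[name] = pickWeighted(categories[name], rand).id;
  }
  return face;
}

const mouths: WeightedShape[] = [
  { id: "mouth-smile", weight: 5 },
  { id: "mouth-neutral", weight: 3 },
  { id: "mouth-shout", weight: 1 },
];
```

In a setup like this, a slider change could adjust the weights (for example, raising the weight of `mouth-shout` as an anger value rises) before calling `generateFace` again.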

Watercolor animation

Watercolor washes are dynamically changed and animated in and out using a masked PNG animation and an SVG filter for color interpolation. Below is my proof-of-concept dynamic SVG filter, which uses a masked CSS animation to animate the watercolor droplet in and out when the data changes. Later, the watercolor splash would change color and shape on every update.